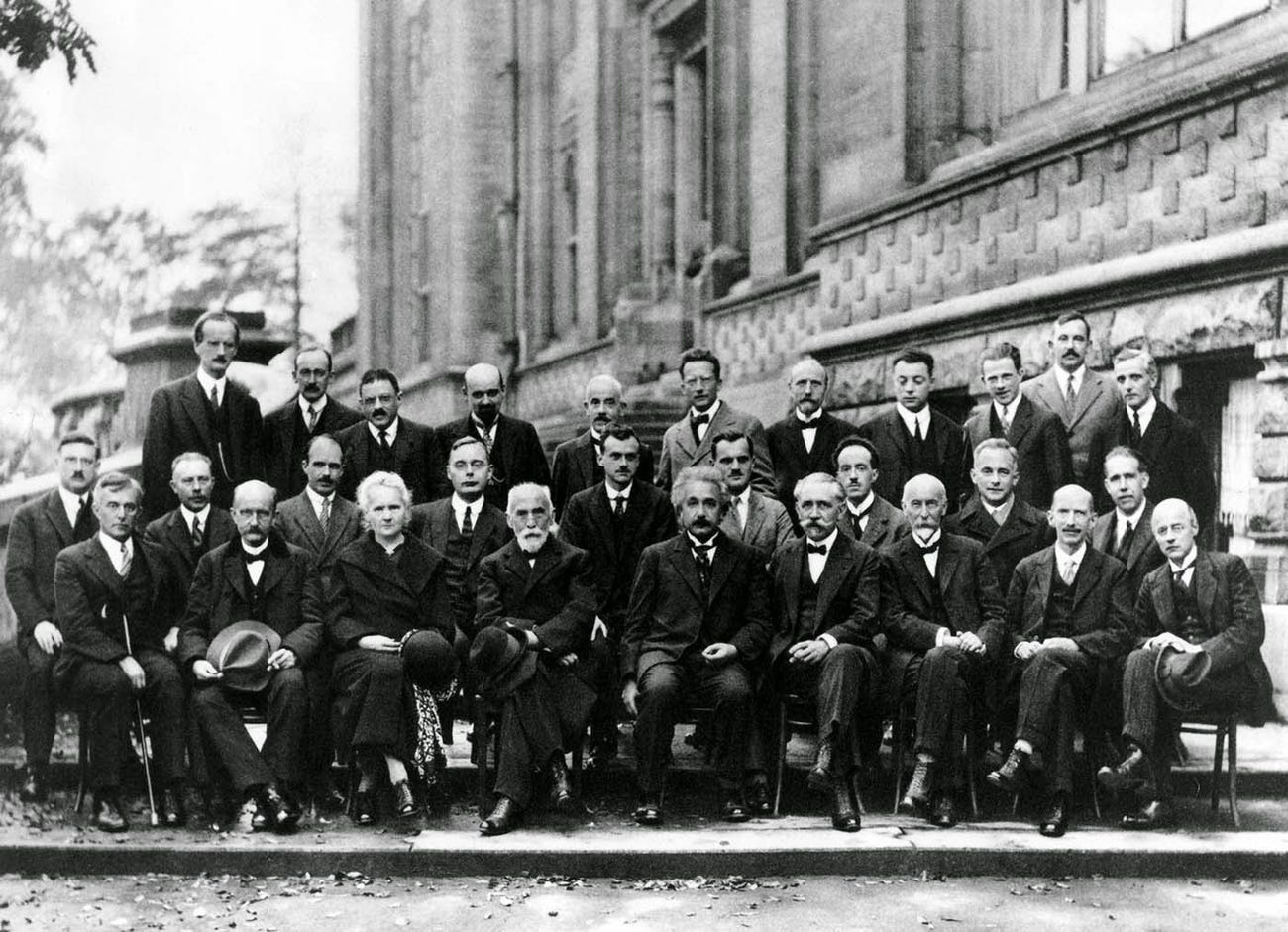

The flap of a butterfly’s wings on one side of the world can cause a hurricane on the other, or so they say. If we take it a bit too literally, that old observation may make us wonder what a hurricane can cause. Or if not a hurricane, how about another kind of large-scale natural disaster? If new findings by researchers from the University of Cambridge and the Leibniz Institute for the History and Culture of Eastern Europe are to be believed, a volcano’s eruption helped lead to the outbreak and spread of the Black Death across Europe in the fourteenth century. In the video above, British history and environmental science specialist Paul Whitewick explains the evidence on a visit to one of the abandoned medieval villages stricken by that plague.

As Cambridge’s Sarah Collins writes, “the evidence suggests that a volcanic eruption — or cluster of eruptions — around 1345 caused annual temperatures to drop for consecutive years due to the haze from volcanic ash and gases, which in turn caused crops to fail across the Mediterranean region.” Desperate Italian city-states thus fell back on trading with grain producers around the Black Sea. “This climate-driven change in long-distance trade routes helped avoid famine, but in addition to life-saving food, the ships were carrying the deadly bacterium that ultimately caused the Black Death, enabling the first and deadliest wave of the second plague pandemic to gain a foothold in Europe.”

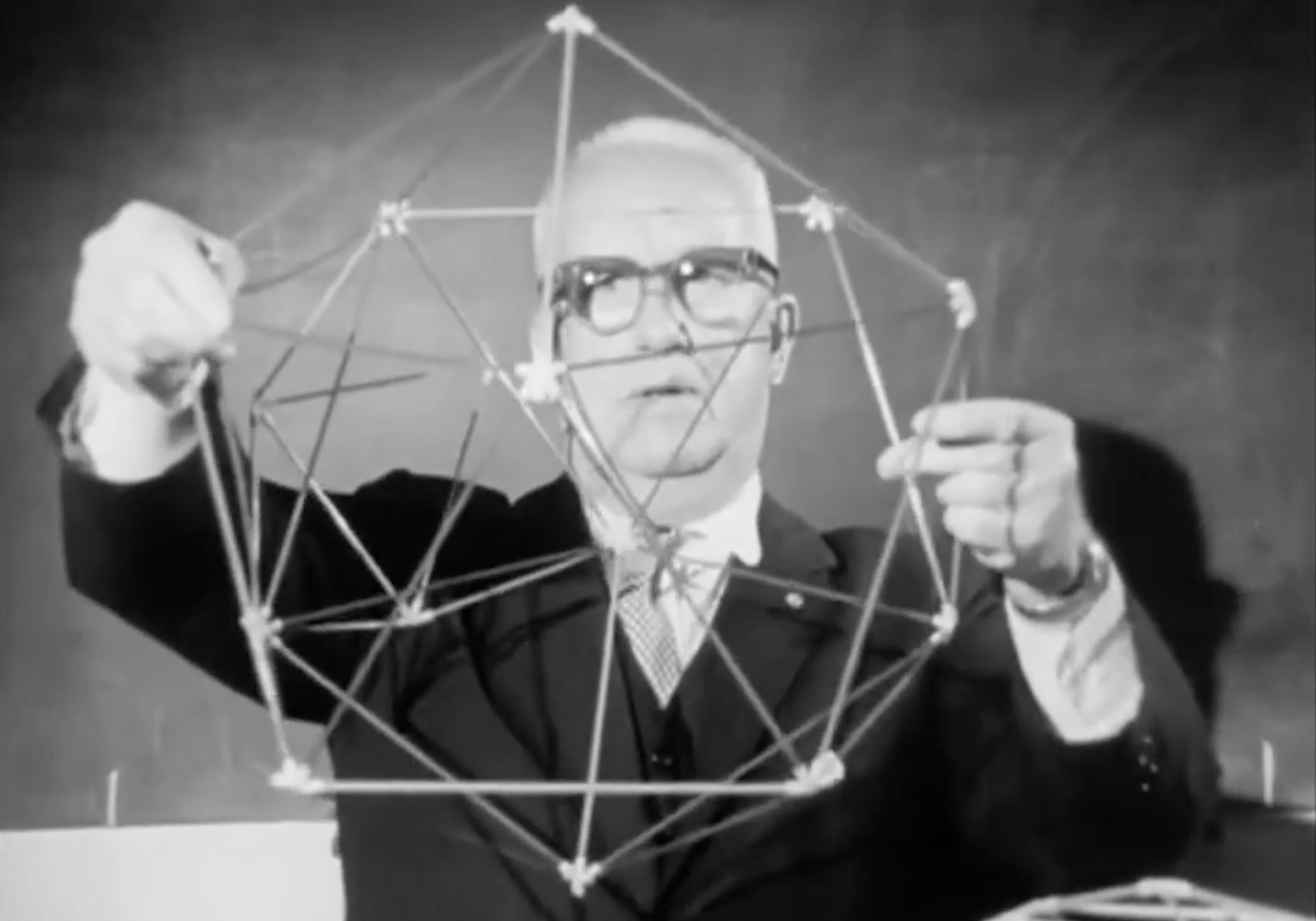

An important clue came in the form of “information contained in tree rings from the Spanish Pyrenees, where consecutive ‘Blue Rings’ point to unusually cold and wet summers in 1345, 1346 and 1347 across much of southern Europe.” Records of lunar eclipses and layers of sulfur locked into ice cores dating to about the same time further heighten the probability of volcanic activity. Key to tying these disparate pieces of evidence together are changes in trade routes: on a map, Whitewick traces “movement increasing along these corridors, grain imports to the maritime republics of Venice and Genoa from north of the Black Sea and beyond, in 1347.” According to written records, the Black Death came to Britain the following year, arriving in “a country already shaped by failed harvests, weakened communities, and rising movement of people and goods.”

Some communities weathered the plague and, in the fullness of time, even bounced back; others, like the village amid whose remains Whitewick stands, practically vanished altogether. “This was a global problem that became very much a local one,” he says, underscoring how the plague revealed the risk factors present even in the early stages of what we now call globalization. “A volcanic eruption thousands of miles away altered climate patterns, and that climate reshaped harvest and trade, and trade carried disease. And here, in the quiet English fields, the consequences have settled into the ground”: not quite as poetic an image as the butterfly and the hurricane, granted, but hardly less relevant to our own world for it.

Based in Seoul, Colin Marshall writes and broadcasts on cities, language, and culture. He’s the author of the newsletter Books on Cities as well as the books 한국 요약 금지 (No Summarizing Korea) and Korean Newtro. Follow him on the social network formerly known as Twitter at @colinmarshall.