In the nineteen-nineties, Quentin Tarantino and Robert Rodriguez first collaborated on a movie. No, it wasn’t From Dusk Till Dawn, the Rodriguez-directed crime-picture-turned-horror-comedy in which Tarantino plays George Clooney’s psychotic brother. It was an anthology picture called Four Rooms, whose separate but interconnected stories, all set in the same hotel on New Year’s Eve, were directed by an all-star lineup of the “Indiewood” auteurs of 1995: Tarantino, Rodriguez, Allison Anders, and Alexandre Rockwell. Rodriguez jumped at the chance to do short-form work and collaborate with friends, but alas, moviegoers showed much more enthusiasm for the concept than for the result, to say nothing of the critics’ judgment.

“Anthologies never work,” Rodriguez said last year during an interview with Lex Fridman. Even with the best filmmakers participating, “they bomb because people can’t quite wrap their head around it”: they feel like the movie keeps starting over and over again. Yet in the fullness of time, Four Rooms took his career up a level, not down.

“I really want this anthology thing to work,” he says, explaining his mindset about a decade after that film’s failure. “What if it’s three stories, like a three-act structure, not four, same director, not four different directors?” After all, “I had already done one and figured out how I could do it better.” The result was Sin City, from 2005, his adaptation of Frank Miller’s acclaimed noir comic-book series, co-directed with Miller himself.

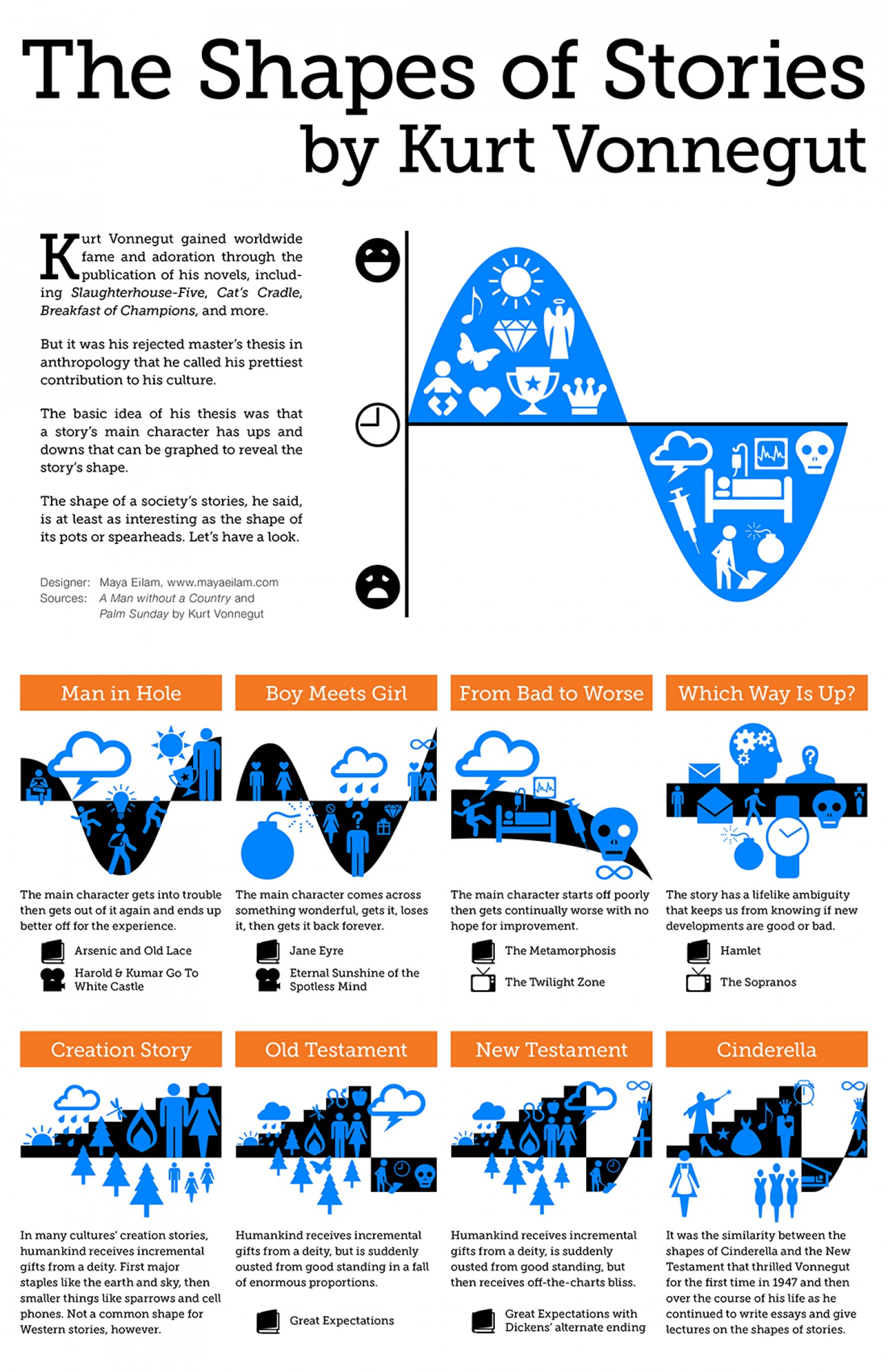

By now, comic-book movies, or at least movies that make use of intellectual property drawn from comic books, have long been commonplace. What Rodriguez and Miller made two decades ago was something different: a film that looked and felt just like its source material. As Danny Boyd explains in the CinemaStix video at the top of the post, Sin City was “not an adaptation, but a translation,” which Rodriguez thought of less as bringing the page to the screen than “taking cinema and turning it into a book.” Ironically, Miller had meant to avoid the whole Hollywood development process by deliberately making the original comics as un-filmable as possible — he just hadn’t reckoned on what technology and Rodriguez’s D.I.Y. ethos would eventually make possible.

Having famously broken into Hollywood with his debut feature El Mariachi, the “$7,000 movie” on which he performed all technical duties, Rodriguez understood how digital filmmaking could empower individual creators. The green screen, which enables the placement of real actors into any setting imaginable, promised him a way to re-create the “layers of unreality” that constitute a flamboyantly stylized work of ultra-noir like Sin City. In the video just above, Boyd shows us how green-screen shooting made it possible to realize the comic’s elaborate aesthetic in motion, creating not a cheap substitute for real sets and locations, as has since become dispiritingly common in Hollywood, but another reality altogether. And if you can bring Quentin Tarantino in to guest-direct a sequence, as Rodriguez did, so much the better.

Related Content:

Director Robert Rodriguez Teaches The Basics of Filmmaking in Under 10 Minutes

How the “Marvelization” of Cinema Accelerates the Decline of Filmmaking

When a Modern Director Makes a Fake Old Movie: A Video Essay on David Fincher’s Mank

The Essential Elements of Film Noir Explained in One Grand Infographic

Every Spider-Man Movie and TV Show Explained By Kevin Smith

Nigerian Teenagers Are Making Slick Sci Fi Films With Their Smartphones

Based in Seoul, Colin Marshall writes and broadcasts on cities, language, and culture. He’s the author of the newsletter Books on Cities as well as the books 한국 요약 금지 (No Summarizing Korea) and Korean Newtro. Follow him on the social network formerly known as Twitter at @colinmarshall.