Image of Ancient Egyptian Dentistry, via Wikimedia Commons

When we assume that modern improvements are far superior to the practices of the ancients, we might do well to actually learn how people in the distant past lived before indulging in “chronological snobbery.” Take, for example, the area of dental hygiene. We might imagine the ancient Greeks or Egyptians as prone to rampant tooth decay, lacking the benefits of packaged, branded toothpaste, silken ribbons of floss, astringent mouthwash, and ergonomic toothbrushes. But in fact, as toothpaste manufacturer Colgate points out, “the basic fundamentals” of toothbrush design “have not changed since the times of the Egyptians and Babylonians—a handle to grip, and a bristle-like feature with which to clean the teeth.” And not only did ancient people use toothbrushes, but it is believed that “Egyptians… started using a paste to clean their teeth around 5000 BC,” even before toothbrushes were invented.

In 2003, curators at a Viennese museum discovered “the world’s oldest-known formula for toothpaste,” writes Irene Zoech in The Telegraph, “used more than 1,500 years before Colgate began marketing the first commercial brand in 1873.” Dating from the 4th century AD, the Egyptian papyrus (not shown above), written in Greek, describes a “powder for white and perfect teeth” that, when mixed with saliva, makes a “clean tooth paste.” The recipe is as follows, Zoech summarizes: “…one drachma of rock salt—a measure equal to one hundredth of an ounce—two drachmas of mint, one drachma of dried iris flower and 20 grains of pepper, all of them crushed and mixed together.”

Zoech quotes dentist Heinz Neuman, who remarked, “Nobody in the dental profession had any idea that such an advanced toothpaste formula of this antiquity existed.” Having tried the ancient recipe at a dental conference in Austria, he found it “not unpleasant”:

It was painful on my gums and made them bleed as well, but that’s not a bad thing, and afterwards my mouth felt fresh and clean. I believe that this recipe would have been a big improvement on some of the soap toothpastes used much later.

Discovered among “the largest collection of ancient Egyptian documents in the world,” the document, says Hermann Harrauer, head of the papyrus collection at the National Library in Vienna, “was written by someone who’s obviously had some medical knowledge, as he used abbreviations for medical terms.”

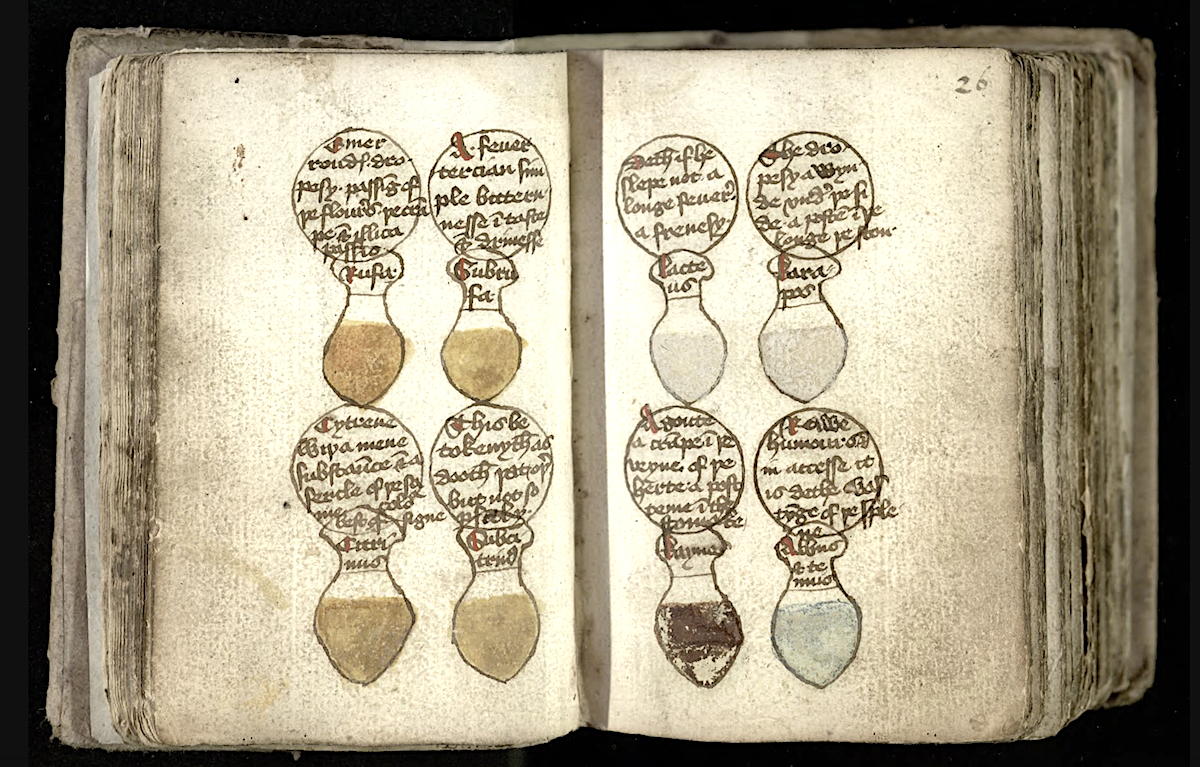

When we survey the dental remedies of medieval England, we do indeed find that modern dental care is far better than much of what was available then. Most dental cures of the time, writes Trevor Anderson in a Nature article, “were based on herbal remedies, charms and amulets.” For example, in the 1314 Rosa Anglica, writer John of Gaddesden reports, “some say that the beak of a magpie hung from the neck cures pain in the teeth.” Another remedy involves sticking a needle into a “many footed worm which rolls up in a ball when you touch it.” Touch the aching tooth with that roly-poly needle and “the pain will be erased.”

However, “there is also documentary evidence,” writes Anderson, “for powders to clean teeth and attempts at filling carious cavities,” as well as some surgical intervention. In Gilbertus Anglicus’ 13th-century Compendium of Medicine, readers are told to rub teeth and gums with cloth after eating to ensure that “no corrupt matter abides among the teeth.” In The Trotula, a compendium of folk remedies from the 11th or 12th century, we find many recipes for what we might consider toothpaste, though their efficacy is dubious. Danièle Cybulskie at Medievalists.net quotes one recipe “for black teeth”:

…take walnut shells well cleaned of the interior rind, which is green, and… rub the teeth three times a day, and when they have been well rubbed… wash the mouth with warm wine, and with salt mixed if desired.

Another, more extravagant recipe sounds impractical:

Take burnt white marble and burnt date pits, and white natron, a red tile, salt, and pumice. From all of these make a powder in which damp wool has been wrapped in a fine linen cloth. Rub the teeth inside and out.

Yet a third recipe gives us a luxury variety, its ingredients well out of reach of the average person. We are assured, however, that this formula “works the best.”

Take some each of cinnamon, clove, spikenard, mastic, frankincense, grain, wormwood, crab foot, date pits, and olives. Grind all of these and reduce them to a powder, then rub the affected places.

Whether any of these formulas would have worked at all, I cannot say, but they likely worked better than charms and amulets. In any case, while medieval European texts tend to confirm certain of our ideas about poor dental hygiene of the past, it seems that the daily practices of more ancient peoples in Egypt and elsewhere might have been much more like our own than we would suspect.

Note: An earlier version of this post appeared on our site in 2016.

Josh Jones is a writer and musician based in Durham, NC.