Cindy Sherman’s Instagram Account Goes Public, Revealing 600 New Photos & Many Strange Self-Portraits

The career of Jenny Holzer, the artist who became famous in the 1970s and 80s through her public installations of phrases like “ABUSE OF POWER COMES AS NO SURPRISE” and “PROTECT ME FROM WHAT I WANT,” has made her into an ideal Tweeter. By the same token, the career of Cindy Sherman, the artist who became famous in the 1970s and 80s through her inventive not-exactly-self-portraits — pictures of herself elaborately remade as a variety of other people, including other famous people, in a variety of time periods — has made her into an ideal Instagrammer.

But though Sherman had been using Instagram for quite some time, most of the public had no idea she had any presence there at all until just this week. “The account, which mysteriously switched from private to public in recent months, is a mix of personal photos alongside Sherman’s ever-famous manipulated images of herself,” reports Artnet’s Caroline Elbaor.

“What we see here is somewhat of a departure from the artist’s traditional model: the frame is tighter and closer to her face, in what is clear use of a phone’s front-facing camera. Plus, the subject matter is decidedly intimate in comparison to her usual work — the latest posts document a stay in the hospital. She may even be having fun with filters.”

She apparently started having fun with them a few months ago, judging from one May post whose photo she describes as “Selfie! No filter, hahaha” — but in which she does seem to have made use of certain effects to give the image a few of the uncanny qualities in which she specializes. Though not a member of the generations the world most closely associates with avid selfie-taking, Sherman brings a uniquely rich experience with the form, or forms like it. Her “method of turning the lens onto herself is uncannily appropriate to our times,” writes Elbaor, “in which the stage-managed selfie has become so ubiquitous that it’s now fodder for exhibitions and often cited as an art form in itself.”

Sherman’s Instagram self-portraiture, in contrast to the often (but not always) glamorous productions that hung on the walls of her shows before, has entered fascinating new realms of strangeness and even grotesquerie. Using the image-modification tools so many of us might previously have assumed were used only by teenage girls desperate to erase their imagined flaws, Sherman twists and bends her own features into what look like living cartoon characters. “A bit scary,” one commenter wrote of Sherman’s recent hospital-bed selfie (taken while recovering from a fall from a horse), “but I can’t look away.” Many of the artist’s thousands and thousands of new and captivated Instagram followers are surely reacting the same way. Check out Sherman’s Instagram feed here.

Related Content:

Say What You Really Mean with Downloadable Cindy Sherman Emoticons

Museum of Modern Art (MoMA) Launches Free Course on Looking at Photographs as Art

See The First “Selfie” In History Taken by Robert Cornelius, a Philadelphia Chemist, in 1839

Based in Seoul, Colin Marshall writes and broadcasts on cities and culture. He’s at work on the book The Stateless City: a Walk through 21st-Century Los Angeles, the video series The City in Cinema, the crowdfunded journalism project Where Is the City of the Future?, and the Los Angeles Review of Books’ Korea Blog. Follow him on Twitter at @colinmarshall or on Facebook.

Marshall McLuhan Predicts That Electronic Media Will Displace the Book & Create Sweeping Changes in Our Everyday Lives (1960)

“The electronic media haven’t wiped out the book: it’s read, used, and wanted, perhaps more than ever. But the role of the book has changed. It’s no longer alone. It no longer has sole charge of our outlook, nor of our sensibilities.” As familiar as those words may sound, they don’t come from one of the think pieces on the changing media landscape now published each and every day. They come from the mouth of midcentury CBC television host John O’Leary, introducing an interview with Marshall McLuhan more than half a century ago.

McLuhan, one of the most idiosyncratic and wide-ranging thinkers of the twentieth century, would go on to become world famous (to the point of making a cameo in Woody Allen’s Annie Hall) as a prophetic media theorist. He saw more clearly than many how the introduction of mass media like radio and television had changed us, and spoke with more confidence than most about how the media to come would change us. He understood these processes as well as he did in no small part because he’d learned their history, going all the way back to the development of writing itself.

Writing, in McLuhan’s telling, changed the way we thought, which changed the way we organized our societies, which changed the way we perceived things, which changed the way we interacted. All of that holds truer for the printing press, and truer still for television. He told the story in his book The Gutenberg Galaxy, which he was working on at the time of this interview in May of 1960. That book would introduce the term “global village” to its readers and crystallize much of what he talked about in this broadcast. Electronic media, in his view, “have made our world into a single unit.”

With this “continually sounding tribal drum” in place, “everybody gets the message all the time: a princess gets married in England, and ‘boom, boom, boom’ go the drums. We all hear about it. An earthquake in North Africa, a Hollywood star gets drunk, away go the drums again.” The consequence? “We’re re-tribalizing. Involuntarily, we’re getting rid of individualism.” And “just as books and their private point of view are being replaced by the new media, so the concepts which underlie our actions, our social lives, are changing.” No longer concerned with “finding our own individual way,” we instead obsess over “what the group knows, feeling as it does, acting ‘with it,’ not apart from it.”

Though McLuhan died in 1980, long before the appearance of the modern internet, many of his readers have seen recent technological developments validate his notion of the global village, and his view of its perils as well as its benefits, more and more with time. At this point in history, mankind can seem less united than ever, possibly because technology now allows us to join any number of global “tribes.” But don’t we feel more pressure than ever to know just what those tribes know and feel just what they feel?

No wonder so many of those pieces that cross our news feeds today still reference McLuhan and his predictions. Just this past weekend, Quartz’s Lila MacLellan did so in arguing that our media, “while global in reach, has come to be essentially controlled by businesses that use data and cognitive science to keep us spellbound and loyal based on our own tastes, fueling the relentless rise of hyper-personalization” as “deep-learning powered services promise to become even better custom-content tailors, limiting what individuals and groups are exposed to even as the universe of products and sources of information expands.” Long live the individual, the individual is dead: step back, and it all looks like one of those contradictions McLuhan could have delivered as a resonant sound bite indeed.

Related Content:

McLuhan Said “The Medium Is The Message”; Two Pieces Of Media Decode the Famous Phrase

The Visionary Thought of Marshall McLuhan, Introduced and Demystified by Tom Wolfe

Based in Seoul, Colin Marshall writes and broadcasts on cities and culture. He’s at work on the book The Stateless City: a Walk through 21st-Century Los Angeles, the video series The City in Cinema, the crowdfunded journalism project Where Is the City of the Future?, and the Los Angeles Review of Books’ Korea Blog. Follow him on Twitter at @colinmarshall or on Facebook.

Watch Randy Newman’s Tour of Los Angeles’ Sunset Boulevard, and You’ll Love L.A. Too

“The longer I live here,” a Los Angeles-based friend recently said, “the more ‘I Love L.A.’ sounds like an unironic tribute to this city.” That hit single by Randy Newman, a singer-songwriter not known for his simple earnestness, has produced a multiplicity of interpretations since it came out in 1983, the year before Los Angeles presented a sunny, colorful, forward-looking image to the world as the host of the Summer Olympic Games. Listeners still wonder now what they wondered back then: when Newman sings the praises — literally — of the likes of Imperial Highway, a “big nasty redhead,” Century Boulevard, the Santa Ana winds, and bums on their knees, does he mean it?

The music video for “I Love L.A.,” at once smirking and enthusiastic, offers a view of Newman’s 1980s Los Angeles, but fifteen years later, he starred in an episode of the public television series Great Streets that presents a slightly more up-to-date, and much more nuanced, picture of the city. In it, the native Angeleno looks at his birthplace through the lens of the 27-mile Sunset Boulevard, Los Angeles’ most famous street — or, in his own words, “one of those places the movies would’ve had to invent, if it didn’t already exist.”

Historian Leonard Pitt (who appears alongside figures like filmmaker Allison Anders, artist Ed Ruscha, and Doors keyboardist Ray Manzarek) describes Sunset as the one place along which you can see “every stratum of Los Angeles in the shortest period of time.” Or as Newman puts it, “Like a lot of the people who live here, Sunset is humble and hard-working at the beginning,” on its inland end. “Go further and it gets a little self-indulgent and outrageous” before it “straightens itself out and grows rich, fat, and respectable.” At its coastal end “it gets real twisted, so there’s nothing left to do but jump into the Pacific Ocean.”

Newman’s westward journey, made in an open-topped convertible (albeit not the 1955 Buick from “I Love L.A.”), takes him from Union Station (America’s last great railway terminal and the origin point of “L.A.’s long, long-anticipated subway system”) to Aimee Semple McPherson’s Angelus Temple; to now-gentrified neighborhoods like Silver Lake, then only in mid-gentrification; to the humble studio where he laid down tracks for some of his biggest records; to the corner where D.W. Griffith built Intolerance’s ancient Babylon set; to the storied celebrity hideout of the Chateau Marmont; to UCLA (“almost my alma mater”); to the Lake Shrine Temple of the Self-Realization Fellowship; and finally to the edge of the continent.

More recently, Los Angeles Times architecture critic Christopher Hawthorne traveled the entirety of Sunset Boulevard again, but on foot and in the opposite direction. The east-to-west route, he writes, “offers a way to explore an intriguing notion: that the key to deciphering contemporary Los Angeles is to focus not on growth and expansion, those building blocks of 20th century Southern California, but instead on all the ways in which the city is doubling back on itself and getting denser.” For so much of the city’s history, “searching for a metaphor to define Sunset Boulevard, writers” — or musicians or filmmakers or any number of other creators besides — “have described it as a river running west and feeding into the Pacific. But the river flows the other direction now.”

Los Angeles has indeed plunged into a thorough transformation since Newman first simultaneously celebrated and satirized it, but something of the distinctively breezy spirit into which he tapped will always remain. “There’s some kind of ignorance L.A. has that I’m proud of. The open car and the redhead and the Beach Boys, the night just cooling off after a hot day, you got your arm around somebody,” he said to the Los Angeles Weekly a few years after taping his Great Streets tour. “That sounds really good to me. I can’t think of anything a hell of a lot better than that.”

Related Content:

Glenn Gould Gives Us a Tour of Toronto, His Beloved Hometown (1979)

Charles Bukowski Takes You on a Very Strange Tour of Hollywood

Join Clive James on His Classic Television Trips to Paris, LA, Tokyo, Rio, Cairo & Beyond

Based in Seoul, Colin Marshall writes and broadcasts on cities and culture. He’s at work on the book The Stateless City: a Walk through 21st-Century Los Angeles, the video series The City in Cinema, the crowdfunded journalism project Where Is the City of the Future?, and the Los Angeles Review of Books’ Korea Blog. Follow him on Twitter at @colinmarshall or on Facebook.

How Arabic Translators Helped Preserve Greek Philosophy … and the Classical Tradition

In the ancient world, the language of philosophy—and therefore of science and medicine—was primarily Greek. “Even after the Roman conquest of the Mediterranean and the demise of paganism, philosophy was strongly associated with Hellenic culture,” writes philosophy professor and History of Philosophy without any Gaps host Peter Adamson. “The leading thinkers of the Roman world, such as Cicero and Seneca, were steeped in Greek literature.” And in the eastern empire, “the Greek-speaking Byzantines could continue to read Plato and Aristotle in the original.”

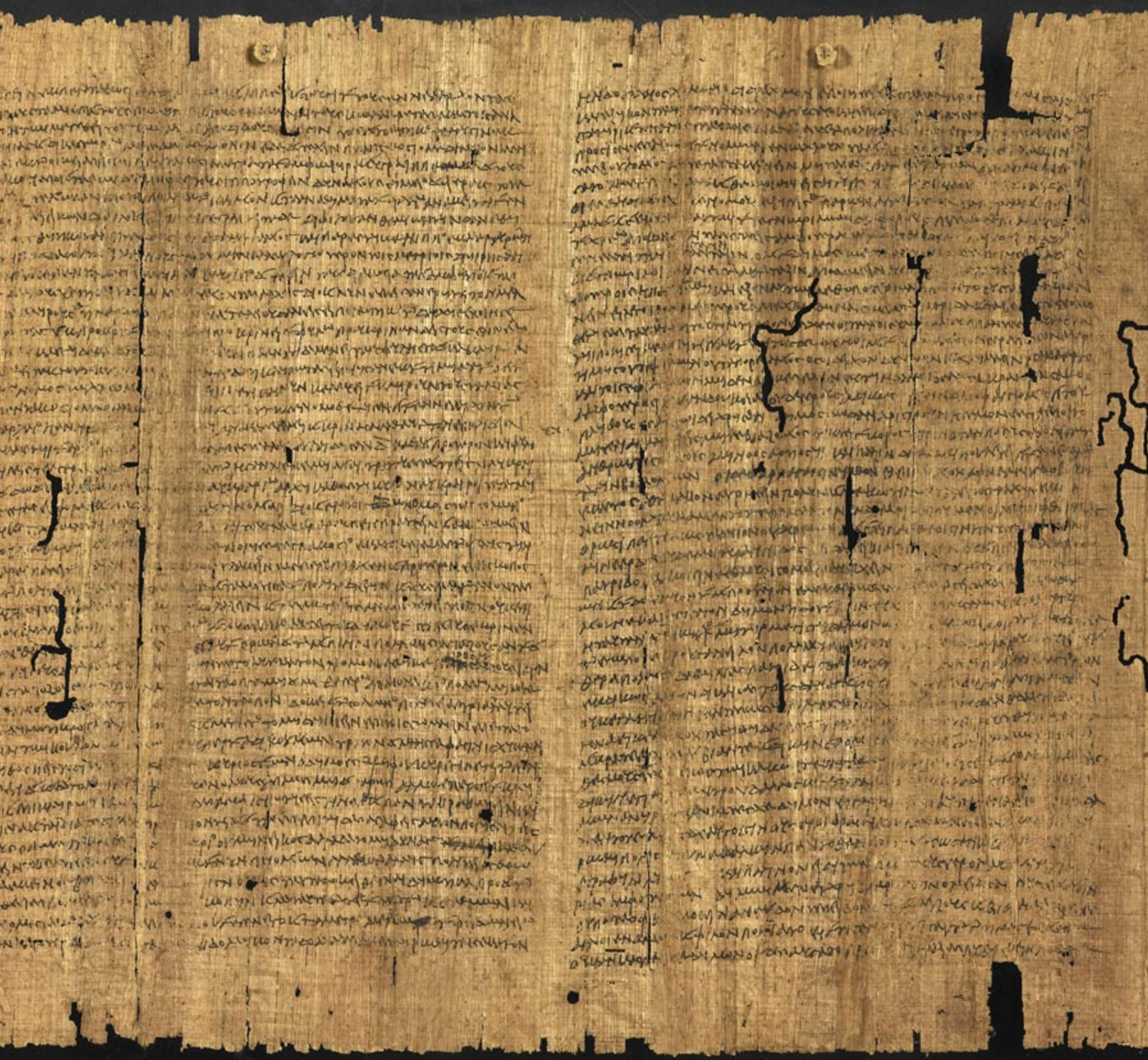

Greek thinkers also had significant influence in Egypt. During the building of the Library of Alexandria, “scholars copied and stored books that were borrowed, bought, and even stolen from other places in the Mediterranean,” writes Aileen Das, Professor of Mediterranean Studies at the University of Michigan. “The librarians gathered texts circulating under the names of Plato (d. 348/347 BCE), Aristotle and Hippocrates (c. 460–c. 370 BCE), and published them as collections.” The scroll above, part of an Aristotelian transcription of the Athenian constitution, was believed lost for hundreds of years until it was discovered in the 19th century in Egypt, in the original Greek. The text, writes the British Library, “has had a major impact in our knowledge of the development of Athenian democracy and the workings of the Athenian city-state in antiquity.”

Alexandria “rivalled Athens and Rome as the place to study philosophy and medicine in the Mediterranean,” and young men of means like the 6th century priest Sergius of Reshaina, doctor-in-chief in Northern Syria, traveled there to learn the tradition. Sergius “translated around 30 works of Galen [the Greek physician]” and other known and unknown philosophers and ancient scientists into Syriac. Later, as Syriac and Arabic came to dominate former Greek-speaking regions, the Greek texts became intense objects of focus for Islamic thinkers, and the caliphs spared no expense to have them translated and disseminated, often contracting with Christian and Jewish scholars to accomplish the task.

The transmission of Greek philosophy and medicine was an international phenomenon, which involved bilingual speakers from pagan, Christian, Muslim, and Jewish backgrounds. This movement spanned not only religious and linguistic but also geographical boundaries, for it occurred in cities as far apart as Baghdad in the East and Toledo in the West.

In Baghdad, especially, by the 10th century, “readers of Arabic,” writes Adamson, “had about the same degree of access to Aristotle that readers of English do today” thanks to a “well-funded translation movement that unfolded during the Abbasid caliphate, beginning in the second half of the eighth century.” The work done during the Abbasid period—from about 750 to 950—“generated a highly sophisticated scientific language and a massive amount of source material,” we learn in Harvard University Press’s The Classical Tradition. Such material “would feed scientific research for the following centuries, not only in the Islamic world but beyond it, in Greek and Latin Christendom and, within it, among the Jewish populations as well.”

Indeed this “Byzantine humanism,” as it’s called, “helped the classical tradition survive, at least to the large extent that it has.” As ancient texts and traditions disappeared in Europe during the so-called “Dark Ages,” Arabic and Syriac-speaking scholars and translators incorporated them into an Islamic philosophical tradition called falsafa. The motivations for fostering the study of Greek thought were complex. On the one hand, writes Adamson, the move was political; “the caliphs wanted to establish their own cultural hegemony,” in competition with Persians and Greek-speaking Byzantine Christians, “benighted as they were by the irrationalities of Christian theology.” On the other hand, “Muslim intellectuals also saw resources in the Greek texts for defending, and better understanding their own religion.”

One well-known figure from the period, al-Kindi, is thought to be the first philosopher to write in Arabic. He oversaw the translation of hundreds of texts by Christian scholars who read both Greek and Arabic, and he may also have added his own ideas to the works of Plotinus and other Greek thinkers. Like Thomas Aquinas a few hundred years later, al-Kindi attempted to “establish the identity of the first principle in Aristotle and Plotinus” as the theistic God. In this way, Islamic translations of Greek texts prepared the way for interpretations that “treat that principle as a Creator,” a central idea in medieval scholastic philosophy and Catholic thought generally.

The translations by al-Kindi and his associates are grouped into what scholars call the “circle of al-Kindi,” which preserved and elaborated on Aristotle and the Neoplatonists. Thanks to al-Kindi’s “first set of translations,” notes the Stanford Encyclopedia of Philosophy, “learned Muslims became acquainted with Plato’s Demiurge and immortal soul; with Aristotle’s search for science and knowledge of the causes of all the phenomena on earth and in the heavens,” and many more ancient Greek metaphysical doctrines. Later translators working under physician and scientist Hunayn ibn Ishaq and his son “made available in Syriac and/or Arabic other works by Plato, Aristotle, Theophrastus, some philosophical writings by Galen,” and other Greek thinkers and scientists.

This tradition of translation, philosophical debate, and scientific discovery in Islamic societies continued for centuries; in the 12th century, Averroes, the “Islamic scholar who gave us modern philosophy,” wrote his commentaries on the works of Aristotle. “For several centuries,” writes the University of Colorado’s Robert Pasnau, “a series of brilliant philosophers and scientists made Baghdad the intellectual center of the medieval world,” preserving ancient Greek knowledge and wisdom that might otherwise have disappeared. When it seems in our study of history that the light of ancient philosophy was extinguished in Western Europe, we need only look to North Africa and the Near East to see that tradition, with its humanistic exchange of ideas, flourishing for centuries in a world closely connected by trade and empire.

Related Content:

Introduction to Ancient Greek History: A Free Online Course from Yale

Free Courses in Ancient History, Literature & Philosophy

Josh Jones is a writer and musician based in Durham, NC. Follow him at @jdmagness

Artists May Have Different Brains (More Grey Matter) Than the Rest of Us, According to a Recent Scientific Study

Image courtesy of the Laboratory of Neuro Imaging at UCLA.

Sometimes—as in the case of neuroscience—scientists and researchers seem to be saying several contradictory things at once. Yes, opposing claims can both be true, given different contexts and levels of description. But which is it, neuroscientists? Do we have “neuroplasticity”—the ability to change our brains, and therefore our behavior? Or are we “hard-wired” to be a certain way by innate structures?

The debate long predates the field of neuroscience. It figured prominently, for example, in the work of John Locke and other early modern theorists of cognition. Locke is best known as the theorist of the tabula rasa, and in “Some Thoughts Concerning Education,” he mostly denies that we are able to change much at all in adulthood.

Personality, he reasoned, is determined not by biology, but in the “cradle” by “little, almost insensible impressions on our tender infancies.” Such imprints “have very important and lasting consequences.” Sorry, parents. Not only did your kid get wait-listed for that elite preschool, but their future will also be determined by millions of sights and sounds that happened around them before they could walk.

It’s an extreme, and unscientific, contention, fascinating as it may be from a cultural standpoint. Now we have psychedelic-looking brain scans popping up in our news feeds all the time, promising to reveal the true origins of consciousness and personality. But the conclusions drawn from such research are tentative and often highly contested.

So what does science say about the eternally mysterious act of artistic creation? The abilities of artists have long seemed to us godlike, drawn from supernatural sources, or channeled from other dimensions. Many neuroscientists, you may not be surprised to hear, believe that such abilities reside in the brain. Moreover, some think that artists’ brains are superior to those of people of more ordinary ability.

Or at least that artists’ brains have more gray and white matter than “right-brained” thinkers in the areas of “visual perception, spatial navigation and fine motor skills.” So writes Katherine Brooks in a Huffington Post summary of “Drawing on the right side of the brain: A voxel-based morphometry analysis of observational drawing.” The 2014 study, published in NeuroImage, involved a very small sample of graduate students, 21 of whom were artists and 23 of whom were not. All 44 students were asked to complete drawing tasks, which were then scored and compared to images of their brains taken by a method called “voxel-based morphometry.”

“The people who are better at drawing really seem to have more developed structures in regions of the brain that control for fine motor performance and what we call procedural memory,” the study’s lead author, Rebecca Chamberlain of Belgium’s KU Leuven University, told the BBC. (Hear her segment on BBC Radio 4’s Inside Science here.) Does this mean, as Artnet News claims in their quick take, that “artists’ brains are more fully developed?”

It’s a juicy headline, but the findings of this limited study, while “intriguing,” are “far from conclusive.” Nonetheless, it marks an important first step. “No studies” thus far, Chamberlain says, “have assessed the structural differences associated with representational skills in visual arts.” Would a dozen such studies resolve questions about causality: nature or nurture? As usual, the truth probably lies somewhere in between.

At Smithsonian, Randy Rieland quotes several critics of the neuroscience of art, which has previously focused on what happens in the brain when we look at a Van Gogh or read Jane Austen. The problem with such studies, writes Philip Ball at Nature, is that they can lead to “creating criteria of right or wrong, either in the art itself or in individual reactions to it.” But such criteria may already be predetermined by culturally-conditioned responses to art.

The science is fascinating and may lead to numerous discoveries. It does not, as the Creators Project writes hyperbolically, suggest that “artists actually are different creatures from everyone else on the planet.” As University of California philosophy professor Alva Noë states succinctly, one problem with making sweeping generalizations about brains that view or create art is that “there can be nothing like a settled, once-and-for-all account of what art is.”

Emerging fields of “neuroaesthetics” and “neurohumanities” may muddy the waters between quantitative and qualitative distinctions, and may not really answer questions about where art comes from and what it does to us. But then again, given enough time, they just might.

Related Content:

This Is Your Brain on Jane Austen: The Neuroscience of Reading Great Literature

The Neuroscience of Drumming: Researchers Discover the Secrets of Drumming & The Human Brain

The Neuroscience & Psychology of Procrastination, and How to Overcome It

Josh Jones is a writer and musician based in Durham, NC. Follow him at @jdmagness

Manchester Benefit Concert Is Streaming Live Now

Just a quick FYI: The Manchester Benefit concert is happening now, and streaming live on YouTube. Coldplay, Pharrell Williams, Justin Bieber, Katy Perry, Miley Cyrus, Niall Horan, Usher, and Ariana Grande will all perform. Click play above to stream the live video feed.


How Finland Created One of the Best Educational Systems in the World (by Doing the Opposite of U.S.)

Every conversation about education in the U.S. takes place in a minefield. Unless you’re a billionaire who bought the job of Secretary of Education, you’d better be prepared to answer questions about racial and economic equity, disability issues, protections for LGBTQ students, teacher pay and unions, religious charter schools, and many other pressing concerns. These issues are not mutually exclusive, nor are they distinct from questions of curriculum, testing, or achievement. The terrain is littered with possible explosive conflicts between educators, parents, administrators, legislators, activists, and profiteers.

The needs of the most deeply invested stakeholders, as they say, the students themselves, seem to get far too little consideration. What if we in the U.S., all of us, actually wanted to improve the educational experiences and academic outcomes for our children—all of them? Where might we look for a model? Many people have looked to Finland, at least since 2010, when the documentary Waiting for Superman contrasted struggling U.S. public schools with highly successful Finnish equivalents.

The film, a positive spin on the charter school movement, received significant backlash for its cherry-picked examples and blaming of teachers’ unions for America’s failing schools. By contrast, Finland’s schools have been described by William Doyle, an American Fulbright Scholar who studies them, as “the ‘ultimate charter school network’” (a phrase that, as we’ll see, means little in the Finnish context). There, Doyle writes at The Hechinger Report, “teachers are not strait-jacketed by bureaucrats, scripts or excessive regulations, but have the freedom to innovate and experiment as teams of trusted professionals.”

Last year, Michael Moore featured many of Finland’s innovative educational experiments in his humorous, hopeful travelogue Where to Invade Next. In the clip above, you can hear from the country’s Minister of Education, Krista Kiuru, who explains to him why Finnish children do not have homework; hear also from a group of high school students, high school principal Pasi Majassari, first grade teacher Anna Hart and many others. Shorter school hours—the “shortest school days and shortest school years in the entire Western world”—leave plenty of time for leisure and recreation. Kids bake, hike, build things, make art, conduct experiments, sing, and generally enjoy themselves.

“There are no mandated standardized tests,” writes LynNell Hancock at Smithsonian, “apart from one exam at the end of students’ senior year in high school… there are no rankings, no comparisons or competition between students, schools or regions.” Yet Finnish students have, in the past several years, consistently ranked in the top ten among millions of students worldwide in science, reading, and math. “If there was one thing I kept hearing over and over again from the Finns,” says Moore above, “it’s that America should get rid of standardized tests,” should stop teaching to those tests, stop designing entire curricula around multiple-choice tests. Hancock describes the results of the Finnish system, and its costs:

Ninety-three percent of Finns graduate from academic or vocational high schools, 17.5 percentage points higher than the United States, and 66 percent go on to higher education, the highest rate in the European Union. Yet Finland spends about 30 percent less per student than the United States.

Moore’s camera registers the shock on Finnish educators’ faces when they hear that many U.S. schools eliminated music, art, poetry and other pursuits in order to focus almost exclusively on testing. Though lighthearted in tone, the segment really drives home the depressing degree to which so many U.S. students receive an impoverished education—one barely worthy of the name—unless they luck into a voucher for a high-end charter school or have the independent means for an expensive private one. In Finland, says the Minister of Education, “all the schools are equal. You never ask where the best school is.”

It’s also illegal in Finland to profit from schooling. Wealthy parents have to ensure that neighborhood schools can give their kids the best education possible, because those schools are the only option. Many people in the U.S. object to comparisons like Moore’s by noting that societies like Finland are “homogenous” next to what may seem to them like maddening cultural diversity in the U.S. However, Finland has incorporated (not without difficulty) large immigrant and refugee populations—even as its schools continue to improve. The government has responded in part to rising immigration with educational solutions such as a “national initiative to reinforce Finnish higher education institutions (HEIs) as significant stakeholders in migrants’ integration.”

The substantive differences between the two countries’ educational systems may have less to do with demography and more to do with economics and the training and social status of teachers.

In Finland, writes Doyle, no teacher “is allowed to lead a primary school class without a master’s degree in education, with specialization in research and classroom practice.” Teaching “is the most admired job in Finland next to medical doctors.” And as Dana Goldstein points out at The Nation—a fact Waiting for Superman failed to mention—Finnish teachers are “gasp!—unionized and granted tenure.” Perhaps an even more significant difference the documentary glossed over: in Finland, “families benefit from a cradle-to-grave social welfare system that includes universal daycare, preschool and healthcare, all of which are proven to help children achieve better results at school.”

Hundreds of studies in recent years substantiate this claim. It would seem intuitive that stresses associated with hunger and poverty would have a pernicious effect on learning, especially when poorer schools are so egregiously under-resourced. And the data says as much, to varying degrees. And yet, we are now in the U.S. slashing breakfast and lunch programs that feed hungry children and deciding whether to uninsure millions of families as millions more still lack basic health coverage. Most every American parent knows that quality daycares and preschools can cost as much per year as a decent university education in this country.

It seems to many of us that the atrocious state of the U.S. educational system can only be attributed to an act of will on the part of our political elite, who see schools as competition for fundamentalist belief systems, opportunities to punish their opponents out of spite, or as rich fields for private profit. But it needn’t be so. It took 40 years for the Finns to create their current system. In the 1960s, their schools ranked on the very low end—along with those in the U.S. By most accounts, they’ve since shown there can be systems that, while surely imperfect in their own way, work for all kids, embedded within larger systems that prize their teachers and families.

Related Content:

In Japanese Schools, Lunch Is As Much About Learning As It’s About Eating

Josh Jones is a writer and musician based in Durham, NC. Follow him at @jdmagness

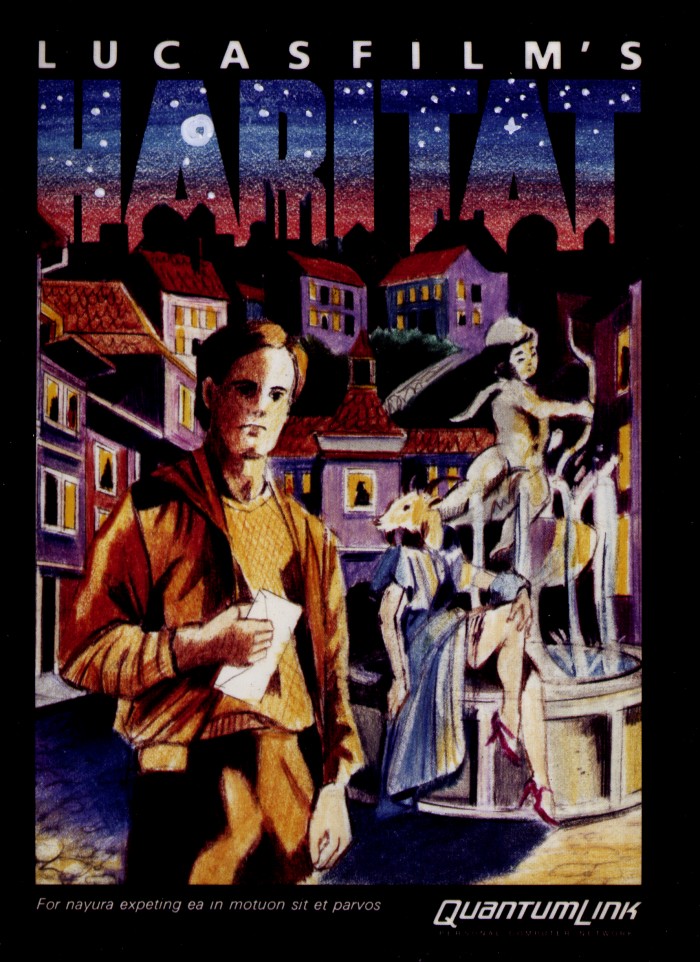

The Story of Habitat, the Very First Large-Scale Online Role-Playing Game (1986)

Long before World of Warcraft, before Everquest and Second Life, and even before Ultima Online, computer-gamers of the 1980s looking for an online world to explore with others of their kind could fire up their Commodore 64s, switch on their dial-up modems, and log into Habitat. Brought out for the Commodore online service Quantum Link by Lucasfilm Games (the studio that went on to develop such classic point-and-click adventure games as Maniac Mansion and The Secret of Monkey Island, and that was later renamed LucasArts), Habitat debuted as the very first large-scale graphical virtual community, blazing a trail for all the massively multiplayer online role-playing games (or MMORPGs) so many of us spend so much of our time playing today.

Designed, in the words of creators Chip Morningstar and F. Randall Farmer, to “support a population of thousands of users in a single shared cyberspace,” Habitat presented “a real-time animated view into an online simulated world in which users can communicate, play games, go on adventures, fall in love, get married, get divorced, start businesses, found religions, wage wars, protest against them, and experiment with self-government.” All that happened and more within the service’s virtual reality during its pilot run from 1986 to 1988. The features both cautiously and recklessly implemented by Habitat’s developers, and the feedback they received from its users, laid down the template for all the more advanced graphical online worlds to come.

At the top of the post, you can watch Lucasfilm’s original Habitat promotional video promise a “strange new world where names can change as quickly as events, surprises lurk at every turn, and the keynotes of existence are fantasy and fun,” one where “thousands of avatars, each controlled by a different human, can converge to shape an imaginary society.” (All performed, the narrator notes, “with the cooperation of a huge mainframe computer in Virginia.”) The form this society eventually took impressed Habitat’s creators as much as anyone, as Farmer writes in his “Habitat Anecdotes” from 1988, an examination of the most memorable happenings and phenomena among its users.

Farmer found he could group those users into five now-familiar categories: the Passives (who “want to ‘be entertained’ with no effort, like watching TV”), the Active (whose “biggest problem is overspending”), the Motivators (the most valuable users, for they “understand that Habitat is what they make of it”), the Caretakers (employees who “help the new users, control personal conflicts, record bugs” and so on), and the Geek Gods (the virtual world’s all-powerful administrators). Sometimes everyone got along smoothly, and sometimes — inevitably, given that everyone had to define the properties of this brand new medium even as they experienced it — they didn’t.

“At first, during early testing, we found out that people were taking stuff out of others’ hands and shooting people in their own homes,” Farmer writes. Later, a Greek Orthodox Minister opened Habitat’s first church, but “I had to eventually put a lock on the Church’s front door because every time he decorated (with flowers), someone would steal and pawn them while he was not logged in!” This citizen-governed virtual society eventually elected a sheriff from among its users, though the designers could never quite decide what powers to grant him. Other surprisingly “real world” institutions developed, including a newspaper whose user-publisher “tirelessly spent 20–40 hours a week composing a 20, 30, 40 or even 50 page tabloid containing the latest news, events, rumors, and even fictional articles.”

Though developing this then-advanced software for “the ludicrous Commodore 64” posed a serious technical challenge, write Farmer and Morningstar in their 1990 paper “The Lessons of Lucasfilm’s Habitat,” the real work began when the users logged on. All the avatars needed houses, “organized into towns and cities with associated traffic arteries and shopping and recreational areas” with “wilderness areas between the towns so that everyone would not be jammed together into the same place.” Most of all, they needed interesting places to visit, “and since they can’t all be in the same place at the same time, they needed a lot of interesting places to visit. [ … ] Each of those houses, towns, roads, shops, forests, theaters, arenas, and other places is a distinct entity that someone needs to design and create. Attempting to play the role of omniscient central planners, we were swamped.”

All this, the creators discovered, required them to stop thinking like the engineers and game designers they were, giving up all hope of rigorous central planning and world-building in favor of figuring out the trickier problem of how, “like the cruise director on an ocean voyage,” to make Habitat fun for everyone. Farmer faces that question again today, having launched the open-source NeoHabitat project earlier this year with the aim of reviving the Habitat world for the 21st century. As much progress as graphical multiplayer online games have made in the past thirty years, the conclusion Farmer and Morningstar reached after their experience creating the first one holds as true as ever: “Cyberspace may indeed change humanity, but only if it begins with humanity as it really is.”

Related Content:

Free: Play 2,400 Vintage Computer Games in Your Web Browser

Long Live Glitch! The Art & Code from the Game Now Released into the Public Domain

Based in Seoul, Colin Marshall writes and broadcasts on cities and culture. He’s at work on a book about Los Angeles, A Los Angeles Primer, the video series The City in Cinema, the crowdfunded journalism project Where Is the City of the Future?, and the Los Angeles Review of Books’ Korea Blog. Follow him on Twitter at @colinmarshall or on Facebook.

Huge Hands Rise Out of Venice’s Waters to Support the City Threatened by Climate Change: A Poignant New Sculpture

Upon arriving in Venice in the late 1930s, columnist and Algonquin Round Table regular Robert Benchley immediately sent a telegram back home to America: “Streets full of water. Please advise.” The line has taken its place in the canon of American humor, but in more recent times the image of water-filled streets — unintentionally water-filled streets, that is — has arisen most often in the conversation about climate change. Some of the potential disaster scenarios envision every major coastal city on Earth eventually turning into a kind of Venice, albeit a much less pleasant version thereof.

And so what better place than the one that hosts perhaps the world’s best known art exhibition, the Venice Biennale, to express climate-change anxiety in the form of public sculpture? “Venice is known for its gondolas, canals, and historic bridges,” writes Condé Nast Traveler’s Sebastian Modak, “but visitors will now also be greeted by another, albeit temporary, reminder of the city’s intimate relationship with water: a giant pair of hands reaching out of the Grand Canal and appearing to support the walls of the historic Ca’ Sagredo Hotel.” The piece is called Support, and it was created by Barcelona-based Italian sculptor Lorenzo Quinn.

“I have three children, and I’m thinking about their generation and what world we’re going to pass on to them,” Quinn told Mashable’s Maria Gallucci. “I’m worried, I’m very worried.” The hands of his 11-year-old son provided the model for Support’s polyurethane-and-resin hands, which weigh 5,000 pounds each and stand on 30-foot pillars at the bottom of the Grand Canal. Modak quotes one of Quinn’s Instagram posts that describes the work as speaking to the people “in a clear, simple and direct way through the innocent hands of a child and it evokes a powerful message, which is that united we can make a stand to curb the climate change that affects us all.”

Those arguing in favor of more aggressive political measures to counteract the effects of climate change have gone to great lengths to point out what forms those effects have so far taken. But the fact that, apart from a stretch of hot summers, few of those effects have yet manifested undeniably in most people’s lives has certainly made their job harder. Still, nobody who visits Venice during the Biennale could fail to pause before Support, a work whose visual drama demands a reaction that temperature charts or data-filled studies can’t hope to provoke by themselves. And even apart from the issue at hand, as it were, Quinn’s sculpture reminds us that art, even in as deeply historical a setting as Venice, can also keep us thinking about the future.

Related Content:

Global Warming: A Free Course from UChicago Explains Climate Change

132 Years of Global Warming Visualized in 26 Dramatically Animated Seconds

A Song of Our Warming Planet: Cellist Turns 130 Years of Climate Change Data into Music

How Climate Change Is Threatening Your Daily Cup of Coffee

Frank Capra’s Science Film The Unchained Goddess Warns of Climate Change in 1958

Watch Episode 1 of Years of Living Dangerously, The New Showtime Series on Climate Change

Based in Seoul, Colin Marshall writes and broadcasts on cities and culture. He’s at work on a book about Los Angeles, A Los Angeles Primer, the video series The City in Cinema, the crowdfunded journalism project Where Is the City of the Future?, and the Los Angeles Review of Books’ Korea Blog. Follow him on Twitter at @colinmarshall or on Facebook.

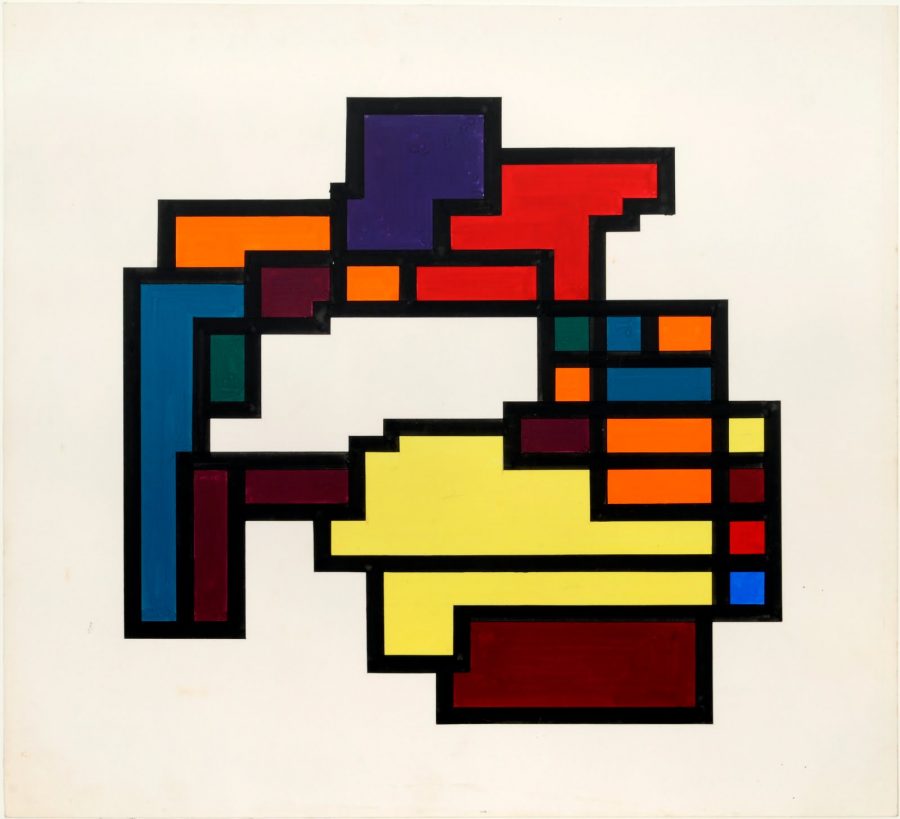

Japanese Computer Artist Makes “Digital Mondrians” in 1964: When Giant Mainframe Computers Were First Used to Create Art

In the 21st century, most of us have tried our hand at making some kind of digital art or another (even if only touching up cellphone photos of ourselves), but imagine the task of producing it 50 years ago. To make digital art when the world had barely heard the term “digital” required access to a mainframe computer, one of those hugely expensive hulks that filled rooms and printed out reams and reams of paper data, as well as the considerable technical know-how to operate it.

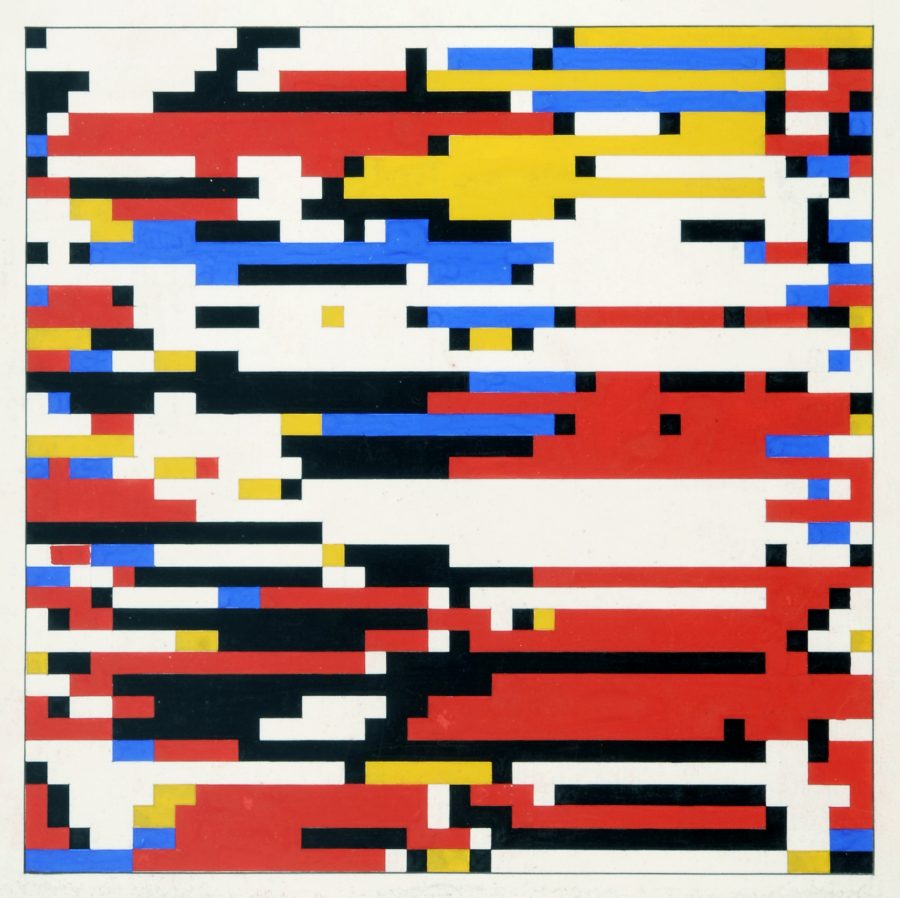

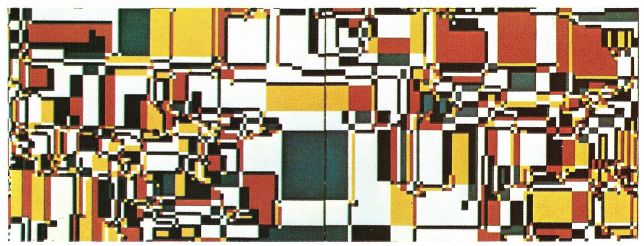

But the achievement also, to go by the very early example of Hiroshi Kawano, required a background in philosophy. A graduate of the University of Tokyo, where he majored in aesthetics and the philosophy of science, Kawano became a research assistant at that school and then a lecturer at the Tokyo Metropolitan College of Air-Technology. He marshaled his knowledge and experience to create these “digital Mondrians,” so described because of their computer-generated resemblance to that Dutch painter’s most rigorously angular, solidly colored work.

Kawano had drawn inspiration, according to a Deutsche Welle article on his donation of his archives to Germany’s Center for Media Art, from “the writings of the German philosopher Max Bense, who proposed (among other things) the idea of measuring beauty using scientific rules. At the same time, Kawano heard that scientists were using computers to create music. Putting the two together, he decided to explore the possibility of using a computer to program beauty.”

Doing so required “writing programs in complex computer languages, then laboriously punching these programs into hundreds of cards before feeding them into the machine.” And “while the design of his works produced during the 1960s might look simple — they’re not. They are the result of complex mathematical algorithms programmed so that, although Kawano sets the rules for how the picture could look, he can’t determine exactly what will appear on the printer.”
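The idea of an artist who sets the rules but not the outcome can be illustrated with a toy program. The sketch below is not Kawano's code, which the article does not describe; the five-letter palette, the grid size, the seed, and the "no cell matches its left or upper neighbor" rule are all hypothetical choices made only to show rule-constrained chance at work.

```python
import random

# Hypothetical palette: letters stand in for Mondrian-style colors
# (R = red, Y = yellow, B = blue, W = white, K = black).
PALETTE = ["R", "Y", "B", "W", "K"]


def generate_grid(rows, cols, seed=None):
    """Fill a rows x cols grid with palette colors.

    The fixed (hypothetical) rule: no cell may share a color with its left
    or upper neighbor. Within that rule each color is picked at random, so
    the rules are set in advance but the exact picture is not.
    """
    rng = random.Random(seed)  # seeded generator makes a run repeatable
    grid = []
    for r in range(rows):
        row = []
        for c in range(cols):
            forbidden = set()
            if c > 0:
                forbidden.add(row[c - 1])      # left neighbor
            if r > 0:
                forbidden.add(grid[r - 1][c])  # upper neighbor
            # At most two colors are excluded, so at least three remain.
            choices = [color for color in PALETTE if color not in forbidden]
            row.append(rng.choice(choices))
        grid.append(row)
    return grid


def render(grid):
    """Turn the grid into printable text, one row per line."""
    return "\n".join(" ".join(row) for row in grid)


if __name__ == "__main__":
    print(render(generate_grid(6, 8, seed=1964)))
```

Changing the seed yields a different picture that still obeys the same rule, which is the tension the quoted passage describes between the programmer's rules and the printer's output.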

Just before Kawano passed away in 2012, the ZKM (or Center for Art and Media Karlsruhe) celebrated his pioneering digital art with the exhibition “The Philosopher at the Computer,” some of which you can see in this German-language video clip. “The retrospective emphasizes Kawano’s special role in the circle of pioneers in ‘computer art,’ ” says its introduction. “He was neither artist, who discovered the computer as a new production medium and theme, nor engineer who came to art via the new machine, but a philosopher, who left his desk for the computer center to experiment with theoretical models.”

Can computers create art? Can they even be used to create art? These questions now have practically obvious answers in the affirmative, but back in 1964 when Kawano produced the first of these pieces, working through trial and error with the advice of the curious staff of his university’s computer center, the questions must have sounded impossibly philosophical. Today, writes Overhead Compartment’s Claudio Rivera, Kawano’s digital Mondrians “suggest themselves as an oddly ephemeral transition in the nexus of technology and art. The familiar colors and forms are flash-frozen in crystalline pixelation, almost as if seized up in the final, overheated throes of a suddenly-too-old computer.”

Related Content:

Andy Warhol’s Lost Computer Art Found on 30-Year-Old Floppy Disks

Watch the Dutch Paint “the Largest Mondrian Painting in the World”

Based in Seoul, Colin Marshall writes and broadcasts on cities and culture. He’s at work on a book about Los Angeles, A Los Angeles Primer, the video series The City in Cinema, the crowdfunded journalism project Where Is the City of the Future?, and the Los Angeles Review of Books’ Korea Blog. Follow him on Twitter at @colinmarshall or on Facebook.
